
ALICE: An LSTM-Inspired Composition Experiment


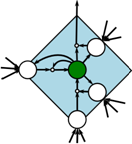

About ALICE

ALICE is a computer program that learns to compose music by repeatedly "listening" to a selection of pieces. ALICE starts with little or no prior knowledge of music and tries to learn, step by step, the inherent structure of the pieces presented to it. ALICE has no knowledge of the high-level structures of common Western music, such as scales, relationships between chords, or concepts like dominant, subdominant and tonic. ALICE is driven by a recurrent neural network, a paradigm inspired by the functioning of the human brain. A special kind of network cell, the Long Short-Term Memory (LSTM) cell, improves ALICE's ability to learn dependencies in music that span long stretches of time.

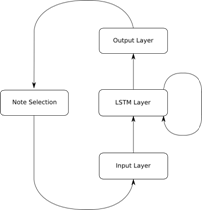

How can a computer compose music?

Technically speaking, the neural network within ALICE performs a prediction task. Music is regarded either as a sequence of notes or as a sequence of timesteps; in the latter case, each timestep is associated with zero, one or several sounding notes. The composition task consists of successively predicting the next note(s) given the notes composed so far. Composition can start from an empty context or from a given melody that is to be completed. ALICE takes a probabilistic approach to prediction: instead of predicting the next note or timestep directly, the network predicts a probability distribution over it, and the next note or timestep is then drawn from this distribution. The composition process thus works linearly from left to right and might more accurately be called an improvisation process. Because each step is sampled, ALICE can, once trained, compose an unlimited number of new pieces.
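The sampling loop described above can be sketched in a few lines of Python. This is only an illustration, not ALICE's actual code (which is written in Java and C++): `compose`, `sample_next` and `toy_predict` are hypothetical names, and `toy_predict` is a hand-written stand-in for the trained network over an assumed alphabet of four note symbols.

```python
import random


def sample_next(distribution, rng):
    """Draw one symbol index according to a probability distribution."""
    return rng.choices(range(len(distribution)), weights=distribution, k=1)[0]


def compose(predict, length, context=None, seed=None):
    """Generate `length` new symbols from left to right.

    `predict` maps the sequence so far to a probability distribution over
    the next symbol. `context` is an optional melody to be completed.
    """
    rng = random.Random(seed)
    sequence = list(context or [])
    for _ in range(length):
        distribution = predict(sequence)
        # The sampled symbol is appended and becomes part of the next input.
        sequence.append(sample_next(distribution, rng))
    return sequence


def toy_predict(sequence, num_symbols=4):
    """Hypothetical stand-in for the trained network (an assumption).

    Prefers the symbol one step above the last one; uniform when empty.
    """
    if not sequence:
        return [1.0 / num_symbols] * num_symbols
    probs = [0.1] * num_symbols
    probs[(sequence[-1] + 1) % num_symbols] = 0.7
    return probs


melody = compose(toy_predict, length=16, seed=0)
```

Calling `compose` again with a different seed yields a different piece from the same model, which is why a sampled approach can produce any number of new pieces.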

Mode of composition/improvisation

In most experiments, ALICE was set up to compose a new melody (starting from an empty context or an initial note) over a given sequence of chords. This is analogous to writing a melody or a solo over given chord changes. It is of course possible to let ALICE create the whole song, including the chord changes. However, the emphasis of this project lay on generating pleasing rhythmic structures and melodies. A particular setup was also kept in mind: ALICE should eventually be able to perform together with a human performer. The music is therefore regarded as having two components, chord changes and melody. This allows the following live setup: while a human performer plays the chord changes, ALICE can, once trained, improvise a melody in real time.
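One way to picture the two-component view is as the input vector the network sees at each timestep. The encoding below is an assumption made for illustration and is not taken from ALICE itself: the previous melody note is one-hot encoded over 12 pitch classes, and the current chord is appended as a 12-element vector marking the pitch classes it contains. All names are hypothetical.

```python
NUM_PITCH_CLASSES = 12  # assumption: notes reduced to pitch classes C..B


def one_hot(index, size):
    """Vector of zeros with a single 1 at `index`; all zeros if index is None."""
    vector = [0] * size
    if index is not None:
        vector[index] = 1
    return vector


def chord_vector(pitch_classes):
    """Multi-hot vector marking every pitch class that sounds in the chord."""
    vector = [0] * NUM_PITCH_CLASSES
    for pc in pitch_classes:
        vector[pc % NUM_PITCH_CLASSES] = 1
    return vector


def encode_timestep(prev_note, chord):
    """Concatenate the melody part and the chord part into one input vector."""
    return one_hot(prev_note, NUM_PITCH_CLASSES) + chord_vector(chord)


# Previous melody note D (2) over a C major chord (C, E, G).
x = encode_timestep(2, [0, 4, 7])
```

In a live setup the chord half would come from the human performer at each timestep, while the melody half would be the note ALICE sampled in the previous step.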

Does that really work?

The answer is up to you. Listen to some of ALICE's pieces and judge for yourself. There is of course much room for improvement, but I am very pleased with the results, considering that my first composition experiments with artificial neural networks failed even to produce a steady rhythm, and their melodies appeared to be completely random. ALICE succeeds in learning phrases from the training pieces and recombines them appropriately. Furthermore, she varies phrases and adds new ones. Naturally, the success of the training phase depends heavily on the set of training pieces.

What is the point of having a computer compose music?

I will answer this question only briefly, though it invites a colourful variety of answers. I am a musician myself, and I find music to be both a very structured, tangible phenomenon and a very emotional, intangible one. Fascinatingly, a human can understand and feel music without explicitly knowing about its underlying structure: intervals, scales or the rules of voice leading. So I wondered whether a computer program might be capable of discovering the underlying structure of a set of musical pieces. I certainly do not want to replace human composers, and I don't think that this is really possible. Human compositions are shaped by the experience, thoughts and wisdom of the composer, which a program cannot (yet) grasp with only auditory sensors and such a short life span.

I want more technical details! How is ALICE implemented?

ALICE's frontend is written in Java. The core, which handles the neural network, is implemented in C++ and connected to Java via the Java Native Interface (JNI). This keeps code execution fast while retaining platform independence. ALICE was successfully tested on Windows and Linux. Please feel free to contact me for further details and discussions.

About the Author

ALICE was created by Andreas Brandmaier as a diploma thesis (Diplomarbeit) in the Computer Science programme of the Technische Universität München, under the supervision of Prof. Dr. rer. nat. habil. Jürgen Schmidhuber and with guidance from Dipl.-Inf. Christian Osendorfer. The author currently works at the Max Planck Institute for Human Development in Berlin. You can contact the author via email: alice@brandmaier.de


Computational Creativity · Automatic Music Composition · Recurrent Neural Networks · LSTM · Composition by Prediction · Computer Music · Machine Learning · Music Creativity


Listen to ALICE's music

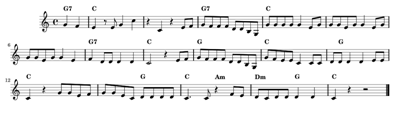

The following song was composed after ALICE had been trained on a set of German folk songs. The result is a new song in an undoubtedly similar style.


Related Literature

· Todd, Peter M. (1989), A Connectionist Approach to Algorithmic Composition
· Hochreiter, Sepp and Schmidhuber, Jürgen (1997), Long short-term memory, Neural Computation, MIT Press
· Eck, Douglas and Schmidhuber, Jürgen (2002), Finding temporal structure in music: Blues improvisation with LSTM recurrent networks, Artificial Neural Networks, ICANN 2002
· Mozer, Mike (1994), Neural Network Music Composition by Prediction: Exploring the Benefits of Psychoacoustic Constraints and Multi-Scale Processing
· Cope, David (2006), Computer Models of Musical Creativity, Cambridge, MA: MIT Press


Fig. 3: A simple tune composed after training ALICE on German folk songs.


Fig. 1: The high-level structure of the recurrent neural network within ALICE. The network comprises three layers. The LSTM layer is a complex, fully connected layer. The output layer represents a probability distribution from which the next note/timestep is drawn successively and fed back to the network's input.
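To make the roles of the LSTM layer and the output layer more concrete, here is a minimal sketch of a single scalar LSTM cell step and a softmax output. It uses the commonly taught LSTM variant with a forget gate, which is an assumption; the source does not state which variant, layer sizes or weights ALICE uses, and all names here are hypothetical.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def lstm_cell_step(x, h_prev, c_prev, weights):
    """One timestep of a single scalar LSTM cell.

    `weights` maps each gate name to (input weight, recurrent weight, bias).
    Returns the new hidden output h and the new cell state c.
    """
    def pre_activation(gate):
        w_x, w_h, bias = weights[gate]
        return w_x * x + w_h * h_prev + bias

    i = sigmoid(pre_activation("input"))         # how much new content to store
    f = sigmoid(pre_activation("forget"))        # how much old state to keep
    o = sigmoid(pre_activation("output"))        # how much state to expose
    g = math.tanh(pre_activation("candidate"))   # proposed new content
    c = f * c_prev + i * g                       # cell state carries long-term memory
    h = o * math.tanh(c)
    return h, c


def softmax(scores):
    """Turn output-layer scores into a probability distribution."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]
```

The additive update of the cell state `c` is what lets information persist over many timesteps, and the softmax result is the distribution from which the next note or timestep would be drawn.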


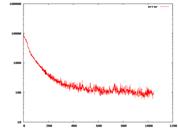

Fig. 2: The error rate during ALICE's training phase. Once a certain threshold is reached, training is stopped and the composition phase begins.
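The stopping rule in the caption can be sketched as a simple loop. This is a minimal illustration under assumptions: `train_epoch` is a hypothetical callable that runs one pass over the training pieces and returns the current error, and the threshold value and the `max_epochs` safety limit are placeholders, not values from the project.

```python
def train_until(train_epoch, threshold, max_epochs=1000):
    """Run training epochs until the error reaches `threshold`.

    Returns the list of errors observed, one per epoch.
    """
    errors = []
    for _ in range(max_epochs):
        error = train_epoch()
        errors.append(error)
        if error <= threshold:
            break  # training ends here; composition can begin
    return errors


# Stand-in for real training: replays a fixed, made-up error curve.
fake_errors = iter([0.9, 0.5, 0.2, 0.05, 0.01])
history = train_until(lambda: next(fake_errors), threshold=0.1)
```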


© Andreas Brandmaier, email: alice@brandmaier.de, Berlin, 2008


Keywords: symbolic systems of music representation, music representation, artificial intelligence, computational creativity, automatic composition, music creativity, composition, composition by prediction, LSTM, long short-term memory, connectionism, neural networks, artificial neural networks, music composition, prediction by memory, musical creativity, computational modeling


If you liked this project, please also visit ELIAS, ALICE's little brother: